How To Clean Up Memory In Python After Running Test

Fugue uses Python extensively throughout our cloud security SaaS product and in our support tools, due to its ease of use, extensive package library, and powerful language tools. One thing we've learned from building complex software for the cloud is that a language is only as good as its debugging and profiling tools. Logic errors, CPU spikes, and memory leaks are inevitable, but a good debugger, CPU profiler, and memory profiler can make finding these errors significantly easier and faster, letting our developers get back to creating Fugue's dynamic cloud orchestration and enforcement system. Let's look at a case in point.

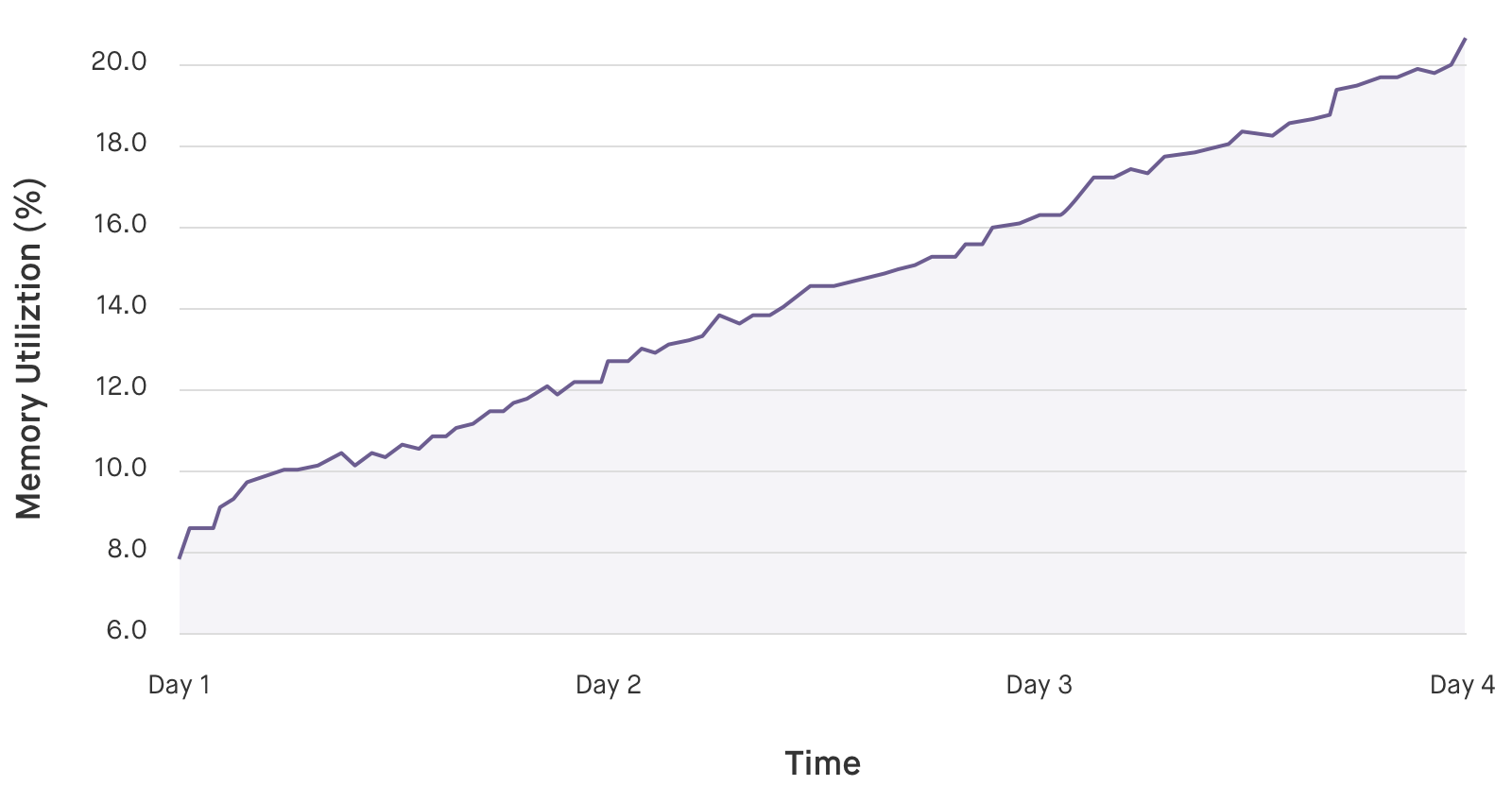

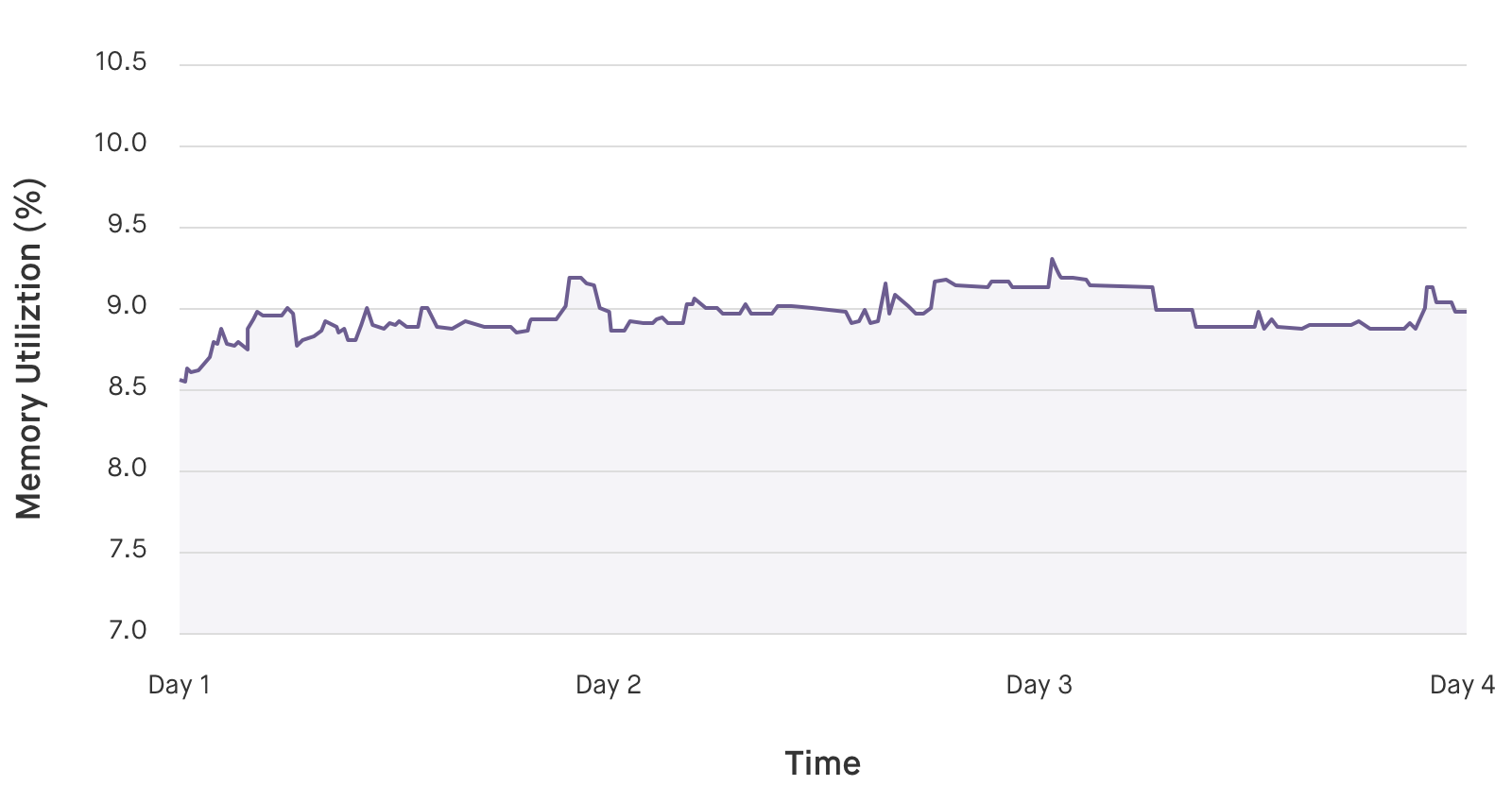

In the fall, our metrics reported that a Python component of Fugue called the reflector was experiencing random restarts and instability after a few days of uptime. Looking at memory usage showed that the reflector's memory footprint increased monotonically and continuously, indicating a memory leak. tracemalloc, a powerful memory tracking tool in the Python standard library, made it possible to quickly diagnose and fix the leak. We discovered that the memory leak was related to our use of requests, a popular third-party Python HTTP library. Rewriting the component to use urllib from the Python standard library eliminated the memory leak. In this blog, we'll explore the details.

Memory Allocation in Python

In most scenarios, there's no need to understand memory management in Python beyond knowing that the interpreter manages memory for you. However, when writing large, complex Python programs with high stability requirements, it's useful to peek behind the curtain to understand how to write code that interacts well with Python's memory management algorithms. Notably, Python uses reference counting and garbage collection to free memory blocks, and only frees memory to the system when certain internal requirements are met. A pure Python script will never have direct control over memory allocation in the interpreter. If direct control over memory allocation is desired, the interpreter's memory allocation can be bypassed by writing or using an extension. For example, numpy manages memory for large data arrays using its own memory allocator.

Fundamentally, Python is a garbage-collected language that uses reference counting. The interpreter automatically allocates memory for objects as they are created and tracks the number of references to those objects in a data structure associated with the object itself. This memory will be freed when the reference count for those objects reaches zero. In addition, garbage collection will detect cycles and remove objects that are only referenced in cycles. Every byte of memory allocated within the Python interpreter is able to be freed between these two mechanisms, but no claims can be made about memory allocated in extensions.
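The two mechanisms can be observed directly. The following is a minimal sketch, assuming CPython (other interpreters may free objects at different times); the `Node` class and both function names are made up for illustration. A weak reference is used as a probe because it does not keep its target alive.

```python
import gc
import weakref


class Node:
    """Tiny illustrative object that can point at another Node."""

    def __init__(self):
        self.other = None


def freed_by_refcount():
    """Return True if an object is freed as soon as its last reference goes away."""
    node = Node()
    probe = weakref.ref(node)  # weak reference: does not raise the reference count
    del node                   # reference count drops to zero
    return probe() is None


def freed_by_cycle_collector():
    """Return (alive_before, alive_after) for a two-object reference cycle."""
    was_enabled = gc.isenabled()
    gc.disable()               # keep the automatic collector out of the way
    try:
        a, b = Node(), Node()
        a.other, b.other = b, a  # a <-> b cycle: the counts never reach zero
        probe = weakref.ref(a)
        del a, b
        alive_before = probe() is not None
        gc.collect()             # the cycle detector finds and frees both nodes
        alive_after = probe() is not None
    finally:
        if was_enabled:
            gc.enable()
    return alive_before, alive_after


if __name__ == "__main__":
    print(freed_by_refcount())
    print(freed_by_cycle_collector())
```

The first function shows reference counting on its own; the second shows an object that only the cycle collector can reclaim.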

Python manages its own heap, separate from the system heap. Memory is allocated in the Python interpreter by different methods according to the type of the object to be created. Scalar types, such as integers and floats, use different memory allocation methods than composite types, such as lists, tuples, and dictionaries. In general, memory is allocated on the Python heap in fixed-size blocks, depending on the type. These blocks are organized into pools, which are further organized into arenas. Memory is pre-allocated using arenas, pools, and blocks, which are then used to store data as needed over the course of the program's execution. Since these blocks, pools, and arenas are kept in Python's own heap, freeing a memory block merely marks it as available for future use in the interpreter. Freeing memory in Python does not immediately free the memory at the system level. When an entire arena is marked as free, its memory is released by the Python interpreter and returned to the system. However, this may occur infrequently due to memory fragmentation.

Due to these abstractions, memory usage in Python often exhibits high-water-mark behavior, where peak memory usage determines the memory usage for the remainder of execution, regardless of whether that memory is actively being used. Furthermore, the relationship between memory being "freed" in code and being returned to the system is vague and difficult to predict. These behaviors make completely understanding the memory usage of complex Python programs notoriously difficult.

Memory Profiling Using tracemalloc

tracemalloc is a package included in the Python standard library (as of version 3.4). It provides detailed, block-level traces of memory allocation, including the full traceback to the line where the memory allocation occurred, and statistics for the overall memory behavior of a program. The official documentation provides a good introduction to its capabilities. The original Python Enhancement Proposal (PEP) introducing it also has some insight on its design.

tracemalloc can be used to locate high-memory-usage areas of code in two ways:

- looking at cumulative statistics on memory use to identify which object allocations are using the most memory, and

- tracing execution frames to identify where those objects are allocated in the code.
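Both approaches map onto the `key_type` argument of `Snapshot.statistics()`. The sketch below is illustrative only: `build_payload` and `profile_two_ways` are hypothetical names standing in for a real workload, not part of the reflector.

```python
import tracemalloc


def build_payload(n):
    """Hypothetical workload: allocate n distinct strings."""
    return [str(i) * 10 for i in range(n)]


def profile_two_ways(n=10000, frames=5):
    """Return the payload plus statistics grouped by line and by traceback."""
    tracemalloc.start(frames)       # keep up to `frames` frames per allocation
    try:
        payload = build_payload(n)  # hold a reference so the blocks stay allocated
        snapshot = tracemalloc.take_snapshot()
    finally:
        tracemalloc.stop()
    by_line = snapshot.statistics("lineno")      # 1) totals per source line
    by_trace = snapshot.statistics("traceback")  # 2) totals per call stack
    return payload, by_line, by_trace


if __name__ == "__main__":
    _, by_line, by_trace = profile_two_ways()
    print(by_line[0])
    for line in by_trace[0].traceback.format():
        print(line)
```

Grouping by `"lineno"` or `"filename"` answers "what is using memory", while `"traceback"` answers "which call path allocated it".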

Module-level Memory Usage

We begin by tracing the memory usage of the entire program, so we can identify, at a high level, which objects are using the most memory. This will hopefully provide us with enough insight to know where and how to look more deeply. The following wrapper starts tracing and prints statistics when Ctrl-C is hit:

```python
import tracemalloc

tracemalloc.start(10)

try:
    run_reflector()
except:
    # A bare except also catches KeyboardInterrupt, so Ctrl-C lands here.
    snapshot = tracemalloc.take_snapshot()
    top_n(25, snapshot, trace_type='filename')
```

tracemalloc.start(10) starts memory tracing, while saving 10 frames of traceback for each entry. The default is 1, but saving more traceback frames is useful if you plan on using tracebacks to locate memory leaks, which will be discussed later. tracemalloc.take_snapshot() takes a snapshot of currently allocated memory in the Python heap. It stores the number of allocated blocks, their size, and tracebacks to identify which lines of code allocated which blocks of memory. Once a snapshot is created, we can compute statistics on memory use, compare snapshots, or save them to analyze later. top_n is a helper function I wrote to pretty print the output from tracemalloc. Here, I ask for the top 25 memory allocations in the snapshot, grouped by filename. After running for a few minutes, the output looks like this:

```
[ Top 25 with filename tracebacks ]
197618 blocks 17.02311134338379 MB
/Users/mike/.pyenv/versions/3.4.2/lib/python3.4/collections/__init__.py:0: size=17.0 MiB, count=197618, average=90 B
105364 blocks 11.34091567993164 MB
<frozen importlib._bootstrap>:0: size=11.3 MiB, count=105364, average=113 B
60339 blocks 9.233230590820312 MB
/Users/mike/.pyenv/versions/3.4.2/lib/python3.4/json/decoder.py:0: size=9455 KiB, count=60339, average=160 B
...
```

This shows the memory allocated by the component over its runtime and still held when the snapshot was taken, grouped by filename. At this level of granularity, it's hard to make sense of the results. For example, the first line shows us that 17 MB of collections objects are created, but this view doesn't provide enough detail for us to know which objects, or where they're being used. A different approach is needed to isolate the problem.
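The top_n helper itself is not shown in this post. A minimal sketch that would produce output in roughly the same shape might look like the following; the body is an assumption reconstructed from the output format, not the original implementation, and the return value is added only to make the helper easier to reuse.

```python
import tracemalloc


def top_n(n, snapshot, trace_type="filename"):
    """Print and return the n largest entries of a snapshot (hypothetical helper)."""
    stats = snapshot.statistics(trace_type)[:n]  # sorted largest first
    print("[ Top {} with {} tracebacks ]".format(n, trace_type))
    for stat in stats:
        print("{} blocks {} MB".format(stat.count, stat.size / 1024 / 1024))
        print(stat)  # tracemalloc's own one-line summary of the entry
    return stats


if __name__ == "__main__":
    tracemalloc.start()
    data = [bytes(1000) for _ in range(100)]  # something to measure
    top_n(3, tracemalloc.take_snapshot())
    tracemalloc.stop()
```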

Understanding tracemalloc Output

tracemalloc shows the net memory usage at the time a memory snapshot is taken. When comparing two snapshots, it shows the net memory usage between the two snapshots. If memory is allocated and freed between snapshots, it won't be shown in the output. Therefore, if snapshots are created at the same point in a loop, any memory allocations visible in the differences between two snapshots are contributing to the long-term total amount of memory used, rather than being a temporary allocation made in the course of execution.

In the case of reference cycles that require garbage collection, uncollected cycles are recorded in the output, while collected cycles are not. Any blocks freed by the garbage collector in the time covered by a snapshot will be recorded as freed memory. Therefore, forcing garbage collection with gc.collect() before taking a snapshot will reduce noise in the output.
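To make the net-usage behavior concrete, here is a small sketch with a deliberately simulated leak. Everything in it (`leaked`, `one_iteration`, `net_growth_per_iteration`) is hypothetical; it only illustrates that temporary allocations and collected cycles drop out of the comparison while retained memory shows up on every iteration.

```python
import gc
import tracemalloc

leaked = []  # hypothetical leak: grows on every iteration


def one_iteration():
    temp = [bytes(1000) for _ in range(100)]  # temporary, freed on return
    cycle = []
    cycle.append(cycle)                       # self-referencing list, needs the GC
    leaked.append(bytes(10000))               # survives the iteration


def net_growth_per_iteration(iterations=3):
    """Return the net size change in bytes between consecutive snapshots."""
    growth = []
    tracemalloc.start()
    try:
        previous = None
        for _ in range(iterations):
            one_iteration()
            gc.collect()                      # clear collectable cycles first
            current = tracemalloc.take_snapshot()
            if previous is not None:
                diff = current.compare_to(previous, "filename")
                growth.append(sum(stat.size_diff for stat in diff))
            previous = current
    finally:
        tracemalloc.stop()
    return growth


if __name__ == "__main__":
    print(net_growth_per_iteration())
```

Each reported value should be at least the size of the retained `bytes` object, even though far more memory was allocated and released inside the iteration.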

Per-Iteration Memory Usage

Since we're looking for a memory leak, it's useful to understand how the memory usage of our program changes over time. We can instrument the main loop of the component, to see how much memory is allocated in each iteration, by calling the following method from the main loop:

```python
def collect_stats(self):
    # `filters` is a list of tracemalloc.Filter objects defined elsewhere
    # in the module; an empty list applies no filtering.
    self.snapshots.append(tracemalloc.take_snapshot())
    if len(self.snapshots) > 1:
        stats = self.snapshots[-1].filter_traces(filters).compare_to(self.snapshots[-2], 'filename')
        for stat in stats[:10]:
            print("{} new KiB {} total KiB {} new {} total memory blocks: ".format(stat.size_diff/1024, stat.size / 1024, stat.count_diff, stat.count))
            for line in stat.traceback.format():
                print(line)
```

This code takes a memory snapshot and saves it, then uses snapshot.compare_to(other_snapshot, group_by='filename') to compare the newest snapshot with the previous snapshot, with results grouped by filename. After a few iterations to warm up memory, the output looks like this:

```
[ Top 5 with filename tracebacks ]
190.7421875 new KiB 1356.5634765625 total KiB 1930 new 13574 total memory blocks: 
(1) File "/Users/mike/.pyenv/versions/3.4.2/lib/python3.4/linecache.py", line 0
2.1328125 new KiB 12.375 total KiB 32 new 86 total memory blocks: 
(2) File "/Users/mike/.pyenv/versions/3.4.2/lib/python3.4/tracemalloc.py", line 0
1.859375 new KiB 18.7001953125 total KiB 3 new 53 total memory blocks: 
(3) File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/packages/urllib3/connection.py", line 0
-1.71875 new KiB 34.5224609375 total KiB -2 new 91 total memory blocks: 
File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/packages/urllib3/connectionpool.py", line 0
1.66015625 new KiB 61.662109375 total KiB 18 new 260 total memory blocks: 
File "/Users/mike/.pyenv/versions/3.4.2/lib/python3.4/urllib/parse.py", line 0
```

The linecache (1) and tracemalloc (2) allocations are part of the instrumentation, but we can also see some memory allocations made by the requests HTTP package (3) that warrant further investigation. Recall that tracemalloc tracks net memory usage, so these memory allocations are accumulating on each iteration. Although the individual allocations are small and don't jump out as problematic, the memory leak only becomes apparent over the course of a few days, so it's likely to be a case of small losses adding up.

Filtering Snapshots

Now that we have an idea of where to look, we can use tracemalloc's filtering capabilities to show only memory allocations related to the requests package:

```python
from tracemalloc import Filter

# Keep only traces whose filename contains "requests".
filters = [Filter(inclusive=True, filename_pattern="*requests*")]
filtered_stats = snapshot.filter_traces(filters).compare_to(old_snapshot.filter_traces(filters), 'filename')
for stat in filtered_stats[:10]:
    print("{} new KiB {} total KiB {} new {} total memory blocks: ".format(stat.size_diff/1024, stat.size / 1024, stat.count_diff, stat.count))
    for line in stat.traceback.format():
        print(line)
```

snapshot.filter_traces() takes a list of Filters to apply to the snapshot. Here, we create a Filter in inclusive mode, so it includes only traces that match the filename_pattern. When inclusive is False, the filter excludes traces that match the filename_pattern. The filename_pattern uses UNIX-style wildcards to match filenames in the traceback. In this example, the wildcards in "*requests*" match occurrences of "requests" in the middle of a path, such as "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/sessions.py".

We then use compare_to() to compare the results to the previous snapshot. The filtered output is below:

```
48.7890625 new KiB 373.974609375 total KiB 4 new 1440 total memory blocks: 
(4) File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/structures.py", line 0
1.46875 new KiB 16.2939453125 total KiB 2 new 49 total memory blocks: 
File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests_unixsocket/__init__.py", line 0
-1.4453125 new KiB 34.2802734375 total KiB -2 new 96 total memory blocks: 
(5) File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/sessions.py", line 0
-0.859375 new KiB 31.8505859375 total KiB -1 new 85 total memory blocks: 
File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/packages/urllib3/connectionpool.py", line 0
0.6484375 new KiB 20.8330078125 total KiB 1 new 56 total memory blocks: 
File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/packages/urllib3/connection.py", line 0
```

With the Filter in place, we can clearly see how requests is using memory. Line (4) shows that roughly 50 KiB of memory is lost in requests on each iteration of the main loop. Note that negative memory allocations, such as (5), are visible in this output. These allocations are freeing memory allocated in previous loop iterations.
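Filters also work in the other direction. As a sketch, exclusive filters can remove the instrumentation noise seen earlier in entries (1) and (2); the names `NOISE_FILTERS` and `without_instrumentation` are illustrative and not part of the original component.

```python
import linecache
import tracemalloc
from tracemalloc import Filter

# Exclusive filters: drop traces whose most recent frame is in these files.
NOISE_FILTERS = [
    Filter(False, tracemalloc.__file__),
    Filter(False, linecache.__file__),
]


def without_instrumentation(snapshot):
    """Return a copy of the snapshot without tracemalloc and linecache traces."""
    return snapshot.filter_traces(NOISE_FILTERS)


if __name__ == "__main__":
    tracemalloc.start()
    first = tracemalloc.take_snapshot()
    second = tracemalloc.take_snapshot()  # now also holds memory used by `first`
    tracemalloc.stop()
    for stat in without_instrumentation(second).statistics("filename")[:5]:
        print(stat)
```

Inclusive and exclusive filters can be mixed in one list: a trace is kept when it matches at least one inclusive filter (if any are given) and no exclusive filter.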

Tracking Down Memory Allocations

To determine which uses of requests are leaking memory, we can take a detailed look at where problematic memory allocations occur by calling compare_to() with traceback instead of filename, while using a Filter to narrow down the output:

```python
stats = snapshot.filter_traces(filters).compare_to(old_snapshot.filter_traces(filters), 'traceback')
```

This prints 10 frames of traceback (since we started tracing with tracemalloc.start(10)) for each entry in the output, a truncated example of which is below:

```
5 memory blocks: 4.4921875 KiB
  File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/sessions.py", line 585
    r = adapter.send(request, **kwargs)
  File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests/sessions.py", line 475
    resp = self.send(prep, **send_kwargs)
  File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests_unixsocket/__init__.py", line 46
    return session.request(method=method, url=url, **kwargs)
  File "/Users/mike/.pyenv/versions/venv/lib/python3.4/site-packages/requests_unixsocket/__init__.py", line 60
    return request('post', url, data=data, json=json, **kwargs)
```

The full traceback gives us the ability to trace backwards from memory allocations to the lines in our project code that generate them. In the case of this component, our uses of requests came from an internal storage library that used an HTTP API. Rewriting the library to use urllib directly eliminated the memory leak.
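The same kind of output can be reproduced on a toy program. In this sketch, `library_call` and `project_code` are hypothetical stand-ins for third-party code and our own code; the point is only that the traceback of the largest allocation includes both layers.

```python
import tracemalloc

_cache = []  # hypothetical module-level list that keeps allocations alive


def library_call():
    """Stand-in for third-party code that allocates memory."""
    _cache.append(bytearray(1000000))


def project_code():
    """Stand-in for our own code that calls into the library."""
    library_call()


def largest_allocation(frames=10):
    """Return the biggest entry of a snapshot grouped by full traceback."""
    tracemalloc.start(frames)
    try:
        project_code()
        snapshot = tracemalloc.take_snapshot()
    finally:
        tracemalloc.stop()
    return snapshot.statistics("traceback")[0]


if __name__ == "__main__":
    stat = largest_allocation()
    print("{} memory blocks: {} KiB".format(stat.count, stat.size / 1024))
    for line in stat.traceback.format():
        print(line)
```

Reading the printed frames shows the path from `largest_allocation` through `project_code` into `library_call`, which is the kind of chain used above to get from requests back to the internal storage library.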

Memory Profiling: Art or Science?

tracemalloc is a powerful tool for understanding the memory usage of Python programs. It helped us understand module-level memory usage, find out which objects are being allocated the most, and it demonstrated how the reflector's memory usage changed on a per-iteration basis. It comes with useful filtering tools and gives us the ability to see the full traceback for any memory allocation. Despite all of its features, however, finding memory leaks in Python can still feel like more of an art than a science. Memory profilers give us the ability to see how memory is being used, but often it's difficult to find the exact memory allocation that is causing problems. It's up to us to synthesize the information we get from our tools into a conclusion about the memory behavior of the program, then make a decision about what actions to take from there.

We use virtually every available Python tool (test frameworks, cProfile, etc.) to make Fugue's system reliable, performant, and easy to maintain. The broker and reflector both take advantage of Python's introspection to make judgments about dynamic calls to the AWS API, which allows us to focus on logic rather than coding exhaustive cases. Fugue leverages the strengths of Python where it makes sense in the system, which ultimately means more product stability and extensibility for end-users.

Source: https://www.fugue.co/blog/diagnosing-and-fixing-memory-leaks-in-python.html

Posted by: leonardwourease.blogspot.com
